What is AI hallucination, in simple terms? It is when an AI system generates information that sounds confident and polished but is actually wrong, invented, or unsupported. In other words, the model makes things up while sounding certain.

That matters because AI tools are now used for research, content drafts, customer support, and even decision-making. If you don’t understand hallucinations, it is easy to trust output that looks believable but contains mistakes.

What Is AI Hallucination?

AI hallucination is a term used to describe outputs from an AI model that are factually incorrect, misleading, or fabricated. The key problem is not just that the answer is wrong; it is that the answer often looks credible enough to pass a quick glance.

This happens with chatbots, image models, and other generative systems. A model may invent a source, misstate a date, or combine real details in a way that creates a false conclusion.

How To Define AI Hallucination In Plain English

If you want the shortest possible answer, here it is: AI hallucination means an AI says something that is not true, even though it sounds true.

A few simple examples:

- A chatbot attributes a book that does not exist to a real author.

- An AI cites a study that does not exist.

- A model confidently explains a legal rule incorrectly.

- A system describes a person, place, or event with made-up details.

These mistakes are not always random. Often, they happen because the model is predicting the most likely next words rather than verifying facts.
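To see how "most likely next words" can add up to a false statement, here is a deliberately tiny Python sketch. It is a bigram model, which only counts which word follows which, so it is far simpler than a real large language model. The corpus is made up for illustration: every training sentence is true, yet greedy next-word prediction still produces a false one.

```python
from collections import Counter, defaultdict

# A tiny, made-up training corpus. Every sentence in it is true,
# but the model only learns which word tends to follow which.
CORPUS = [
    "the eiffel tower is in paris",
    "the louvre is in paris",
    "the colosseum is in rome",
    "the colosseum is very old",
]


def train_bigrams(sentences):
    """Count how often each word is followed by each other word."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        for prev, nxt in zip(words, words[1:]):
            counts[prev][nxt] += 1
    return counts


def generate(counts, start, max_words=6):
    """Greedily append the most frequent next word. Nothing checks facts."""
    words = start.split()
    for _ in range(max_words):
        followers = counts.get(words[-1])
        if not followers:
            break  # no known continuation, so stop
        words.append(followers.most_common(1)[0][0])
    return " ".join(words)


model = train_bigrams(CORPUS)
print(generate(model, "the colosseum is"))  # prints "the colosseum is in paris"
```

Because "in" is followed by "paris" more often than by "rome" in this corpus, the model completes "the colosseum is" with "in paris": fluent, confident, and wrong. Real models are vastly more capable, but they face a similar pressure toward likely text rather than verified text.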

Why AI Hallucinations Happen

AI models are built to generate language patterns, not to think like a human expert with grounded knowledge. They learn from large datasets and then predict what text should come next based on context.

That process can produce useful answers, but it also creates room for error. Hallucinations often happen when the model:

- Lacks enough information

- Faces a vague or ambiguous prompt

- Tries to answer anyway instead of admitting uncertainty

- Mixes patterns from different sources

- Extends a plausible guess into a false claim

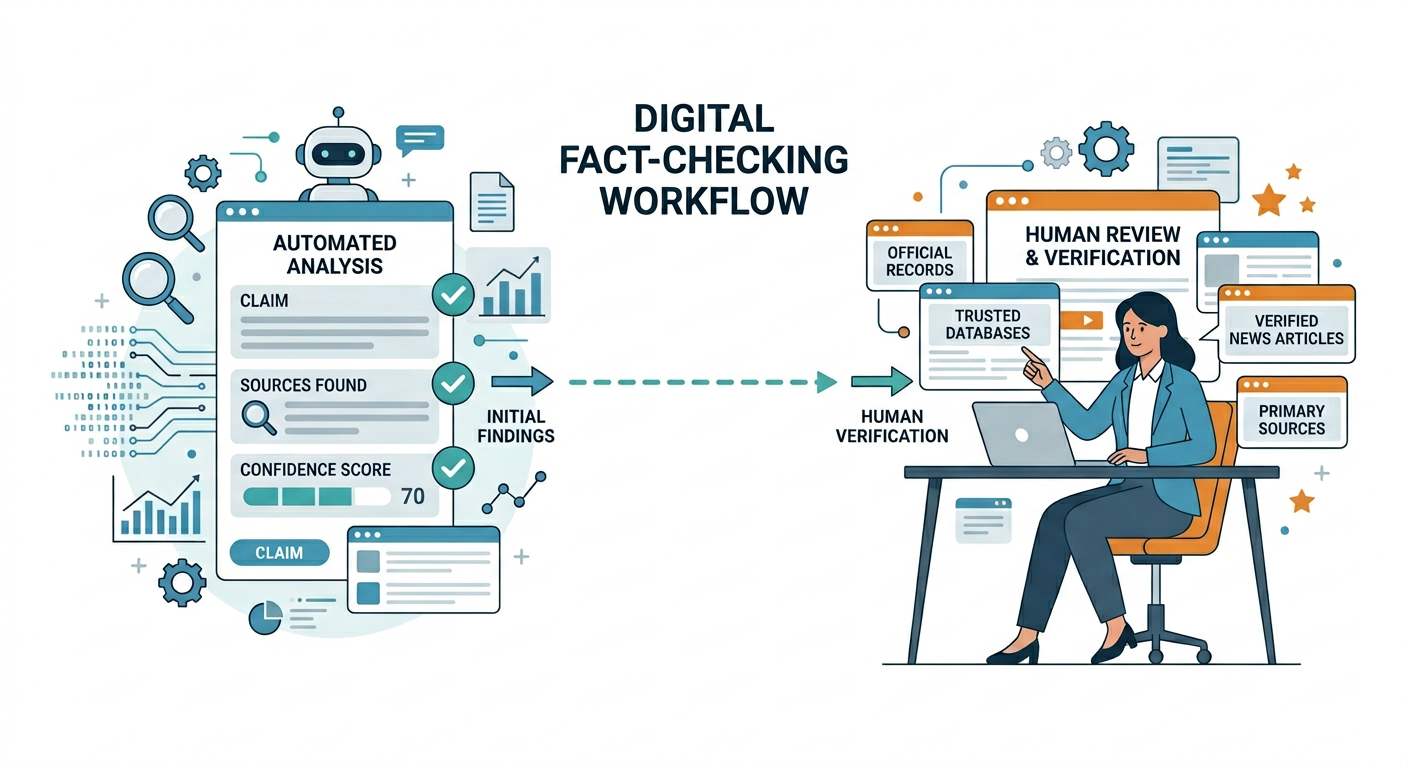

This is why AI can be very helpful and still unreliable without human review.

Common Types Of AI Hallucination

Fabricated facts

The model invents details that were never true, like dates, names, or statistics.

Fake citations

The AI may cite an article, paper, or other source that sounds real but does not exist.

Context confusion

It may blend multiple topics together and create a misleading explanation.

Overconfident reasoning

The model gives a clear answer to a question that actually needs nuance or verification.

Visual hallucinations

In image generation, the model may create impossible objects, incorrect text, or distorted anatomy.

Why It Matters For SEO, Content, And Business Teams

If you publish content based on hallucinated information, the damage can be bigger than a simple typo. You can lose trust, hurt rankings, and confuse customers.

For agencies and website owners, this creates a real workflow issue. AI can speed up drafting, but it should not replace verification, especially for topics involving money, health, law, products, or technical SEO.

That is why teams building content systems should create review steps, fact-check processes, and internal guardrails before publishing. If you are improving your content workflow, a strong website audit can also help identify pages where weak facts or thin content may be holding performance back.

How To Spot AI Hallucination Before It Causes Problems

The good news is that hallucinations often leave clues. You just need to know what to look for.

Watch for:

- Numbers without a source

- Very specific claims that feel oddly neat

- Citations that cannot be verified

- Contradictory statements in the same answer

- Overly polished language with no real substance

- Answers that refuse to admit uncertainty
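Some of these clues can be screened for automatically before a human reads the draft. The Python sketch below is a hypothetical pre-review filter, not an established tool: it assumes a source is marked with a URL or the word "source:", and it uses a short, illustrative list of vague citation phrases. It only flags sentences for an editor to check; it cannot tell whether a claim is actually true.

```python
import re

# Hypothetical heuristics: they flag text for human review,
# they do not detect hallucinations on their own.
NUMBER = re.compile(r"\d+(?:\.\d+)?%?")
SOURCE_HINT = re.compile(r"https?://|\bsource:", re.IGNORECASE)
VAGUE_CITATION = re.compile(
    r"\b(?:studies show|research shows|experts say|according to a (?:recent )?study)\b",
    re.IGNORECASE,
)


def split_sentences(text):
    """Naive splitter: break after '.', '!' or '?' followed by whitespace."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def flag_for_review(text):
    """Return (sentence, reason) pairs that a human editor should verify."""
    flags = []
    for sentence in split_sentences(text):
        if NUMBER.search(sentence) and not SOURCE_HINT.search(sentence):
            flags.append((sentence, "number without a source"))
        if VAGUE_CITATION.search(sentence):
            flags.append((sentence, "vague or unverifiable citation"))
    return flags


# A made-up draft used only to exercise the checks.
draft = (
    "Studies show that 73% of readers trust AI summaries. "
    "Our traffic grew 40% last year (source: https://example.com/report). "
    "Always review drafts before publishing."
)
for sentence, reason in flag_for_review(draft):
    print(f"[{reason}] {sentence}")
```

Only the first sentence is flagged, twice: once for an unsourced number and once for "Studies show". A filter like this will over-flag (years and version numbers count as numbers), which is acceptable when its only job is to fill a review queue.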

A practical habit is to ask, “Can I verify this somewhere trustworthy?” If the answer is no, don’t publish it as fact.

How To Reduce Hallucinations In Your Workflow

You cannot eliminate the risk completely, but you can reduce it significantly.

Use better prompts

Ask the model to flag uncertainty, cite its sources, or show its reasoning step by step. The clearer the request, the less the model tends to guess.

Verify important claims

Cross-check any statistic, quote, or technical detail before publishing.

Keep humans in the loop

For business content, human review is essential. AI should assist, not replace, judgment.

Limit high-risk use cases

Do not rely on AI alone for legal, medical, or financial content.

Build reusable review checklists

Simple editorial rules can catch many avoidable errors before they go live.
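If your team tracks reviews in software, a checklist can be made reusable by treating it as data and blocking publication until every item is ticked. The Python sketch below is one hypothetical way to do that; the item wording is illustrative and drawn from the checks described in this article, and the class is not part of any particular CMS. It assumes Python 3.9 or later for the `list[str]` annotations.

```python
from dataclasses import dataclass, field


@dataclass
class ReviewChecklist:
    """A reusable pre-publish checklist. Item wording is illustrative."""

    items: list[str] = field(default_factory=lambda: [
        "Every statistic has a verifiable source",
        "Every citation was opened and confirmed to exist",
        "Product features and policies match official documentation",
        "Legal, medical, or financial claims were reviewed by a qualified person",
    ])
    checked: set[str] = field(default_factory=set)

    def check(self, item: str) -> None:
        """Mark one item as done; reject items that are not on the list."""
        if item not in self.items:
            raise ValueError(f"Unknown checklist item: {item!r}")
        self.checked.add(item)

    def outstanding(self) -> list[str]:
        """Items a reviewer has not yet confirmed, in checklist order."""
        return [item for item in self.items if item not in self.checked]

    def ready_to_publish(self) -> bool:
        """Publishing is allowed only when nothing is outstanding."""
        return not self.outstanding()


review = ReviewChecklist()
review.check("Every statistic has a verifiable source")
print(review.ready_to_publish())  # False: three items are still open
print(review.outstanding())
```

Keeping the items as plain data means each team can adjust the wording without touching the gating logic.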

AI Hallucination Vs. Human Mistakes

Human writers make mistakes too, but AI hallucinations are different in a few ways. A human error is often a typo, a missed update, or a misunderstanding. An AI hallucination is usually a confident-sounding fabrication.

That difference matters because confidence can be misleading. Readers often trust polished language, even when the underlying claim is wrong.

Examples Of AI Hallucination In Real-World Use

Here are a few scenarios where it can show up:

- A marketer asks for blog statistics and gets a made-up number.

- A support bot gives a customer a policy that does not exist.

- A content tool invents a product feature.

- An AI assistant names the wrong company founder.

These are not rare edge cases. They are common enough that every team using AI should plan for them.

Frequently Asked Questions

What does AI hallucination mean?

It means an AI produces information that is false or unsupported, even though it sounds confident.

Is AI hallucination the same as lying?

Not exactly. Lying implies intent, while AI systems do not have intent in the human sense. They generate incorrect output without understanding truth the way people do.

Can hallucinations be completely prevented?

No, but they can be reduced with better prompts, verification, and human review.

Why do AI models sound so confident when they are wrong?

Because they are optimized to produce fluent, likely text. Fluency does not guarantee accuracy.

Are hallucinations only a problem with chatbots?

No. They can also appear in image generation, summarization, translation, and other AI tasks.

How should businesses handle hallucinations?

They should set review processes, verify important claims, and avoid publishing AI output without oversight.

Should I trust AI content at all?

Yes, but carefully. AI is useful for brainstorming and drafting, but factual claims should always be checked.

Build A Safer Content Process

If your team uses AI for content, marketing, or SEO, the goal is not to avoid it completely. The goal is to use it responsibly.

That means combining speed with verification. It also means using audits, editorial guidelines, and clear review steps so you catch errors before they reach customers or search engines.

If you want help improving content quality, technical performance, and AI visibility, explore AuditSky for audits designed to find issues that hurt growth.

Conclusion

Put simply, AI hallucination is when an AI generates convincing but incorrect information. That can be a harmless annoyance in casual use, but it becomes a serious issue when accuracy matters.

The smartest approach is to treat AI as a fast assistant, not a final authority. Verify the details, review the output, and build a process that protects both trust and performance.